Squad Health Checks

As a scrum master or agile coach, you play an important part in enabling a culture of continuous improvement. One way of doing this is to get the teams you work with into the habit of retrospecting. Ideally, teams are always on the lookout for ways to improve their capability to meet business needs both now and in the future. A key part of agile working is having heartbeat meetings: retrospectives where the team can discuss problems and hindrances, and ways to optimise their approach.

There is no single best practice for gauging a team’s current state and identifying improvements. An experienced scrum master should know the most appropriate method to use at any given time, depending on the challenges the team faces.

Methods range from lightweight and unstructured to exhaustive and highly structured. Examples of lightweight methods include team temperature-taking and simple games such as Sails and Anchors. Temperature-taking requires each team member to submit their own personal view on a scale of 1 to 5, where 1 indicates there are big problems and 5 suggests things are going very well indeed. Sails and Anchors asks for submissions on factors that may be impeding the team and factors that are enabling it. In both cases, the submissions should prompt discussion that generates actions to improve or optimise the situation.

In the first of our Agile Delivery Tech Talks last September, I did a presentation on the Agile Maturity Model that we developed. This was very much a deep-dive exercise intended to discover which areas required the most focus from both management and the squads themselves to ensure we were best meeting the needs of the business.

Agile is a much used and abused term these days, but it’s also something that we pride ourselves on as a business and as software delivery professionals. Many of the external communications used by our recruitment team focus on agility, we employ people based in part on their experience with agile methods, and we even have coffee mugs saying “We bet you’ve never worked anywhere as agile”.

What we didn’t have, though, was a shared understanding of exactly what was meant by this and, more importantly, of how to make sure we continued improving in a tangible way.

We set about designing the model based on four high-level themes:

- Foundations, which represent agile hygiene factors such as squad size, process fundamentals and facilitation.

- Delivery Performance, which encompasses our planning and forecasting capability, value focus and data-driven decision-making.

- Culture, which deals with trust and respect, collaboration and teamwork, and transparency.

- Leadership, which concerns the presence and engagement of our technical and product leaders, including the maintenance of a well-ordered backlog and visible roadmap, with a shared vision and goals.

Once we had these themes fleshed out into agile factors across four maturity levels, we had a very detailed view of the range of states that our delivery squads could fall into. The next step was to run sessions to score each of these factors from 1 to 4, which meant working through all 100 scenarios.

As you can imagine, this was quite a time-consuming exercise: it took around 1.5 hours to complete for each squad.

So what did we learn?

We gained a great deal of insight from this exercise. Some squads determined that they didn’t have as much insight into the future vision and goals as they wanted. Others felt that they didn’t receive enough feedback about the impact of the work that they had delivered. Some squads felt that they were too big and might benefit from being separated into smaller sub-teams.

We also learned that defining “agile” is a big challenge in itself: the areas it covers overlap in many places and, because it is such a wide-ranging umbrella term, a maturity model has to be very context-specific.

The main disadvantage we found was that this was a bit of a behemoth of an exercise to keep repeating, and was going to result in long feedback loops around any interventions made. It also didn’t provide an easily digestible output; it was essentially a big spreadsheet with scores added. There was also a built-in assumption that everyone should strive to reach level 4 maturity on all of the factors, which simply isn’t feasible.

We needed something that gave us structure to ensure focus, was lighter weight and more repeatable, and provided a visual output which was easy to interpret across multiple squads.

After a little research we discovered the “Squad Health Check”, developed by Spotify, which looked to fit the bill. It is a structured assessment approach for multiple delivery teams, designed to help them identify trends and areas for improvement. It is highly visual, allowing management to see common pain points. It is also lightweight enough to run more frequently, as part of the team retro.

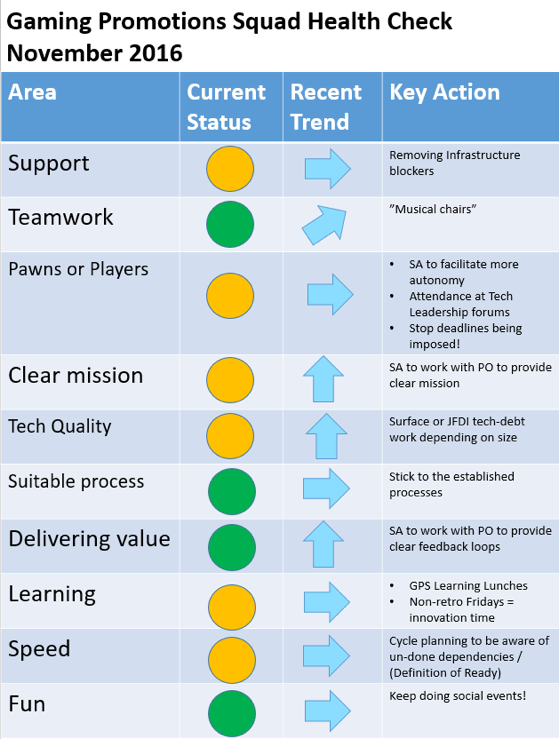

Here is an example of the results of a squad health check for one squad. At a glance, it is possible to see how happy this team is. There is a good mix of green and amber, with no reds and no downward-trending arrows:

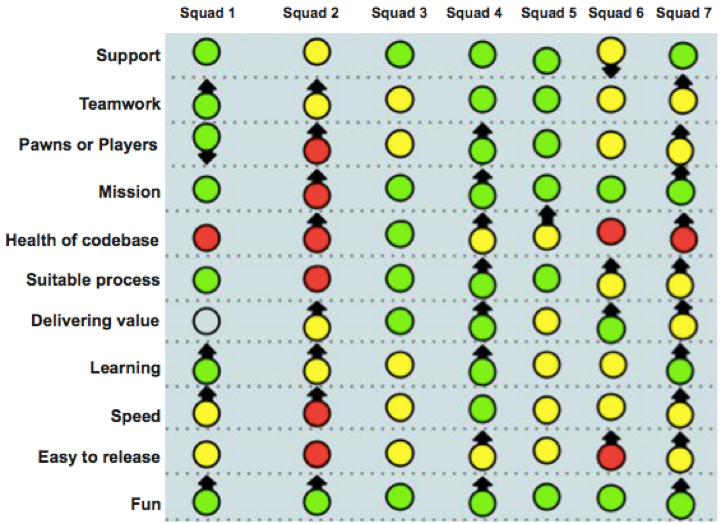

Here is an example output from multiple squads:

- You can scan vertically to see how each team is doing.

- You can scan horizontally to see if there are pain points across multiple squads, which would indicate systemic problems.

- You can also look at the whole picture to see the balance of red/green/amber, and if there are any worrying trends.
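If you record the results digitally, these three ways of reading the combined output can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the squad names, perspective names, ratings and the 50% threshold for calling something “systemic” are all made up for the example, not taken from our own squads.

```python
from collections import Counter

# Hypothetical results: squad -> perspective -> agreed colour.
results = {
    "Squad A": {"Speed": "green", "Learning": "amber", "Support": "red"},
    "Squad B": {"Speed": "amber", "Learning": "green", "Support": "red"},
    "Squad C": {"Speed": "green", "Learning": "green", "Support": "amber"},
}


def squad_summary(results):
    """Vertical scan: how many of each colour does each squad have?"""
    return {squad: Counter(scores.values()) for squad, scores in results.items()}


def systemic_pain_points(results, threshold=0.5):
    """Horizontal scan: perspectives rated red by at least `threshold` of squads.

    The threshold is an assumption; pick whatever share feels meaningful.
    """
    reds = Counter()
    for scores in results.values():
        for perspective, colour in scores.items():
            if colour == "red":
                reds[perspective] += 1
    return sorted(p for p, n in reds.items() if n / len(results) >= threshold)


def overall_balance(results):
    """Whole picture: total count of each colour across all squads."""
    total = Counter()
    for scores in results.values():
        total.update(scores.values())
    return total


print(squad_summary(results))
print(systemic_pain_points(results))
print(overall_balance(results))
```

A summary like this complements the visual output rather than replacing it; the discussion around the colours is where the value lies.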

If you are interested in running your own Squad Health Check, here’s a step-by-step approach:

- Agree the various “perspectives” you would like to rate yourselves on. The standard out-of-the-box Spotify ones are shown in the image above.

- Agree your definitions of “Happy” and “Sad” for each of these, to aid discussions.

- Take Red/Amber/Green cards for each squad member to the assessment session.

- Run the session – get each team member to play their hand at the same time, to avoid groupthink.

- Capture the trends and the agreed actions for each area.

- Produce an output similar to that above and make it visible in your working area, preferably near your team Kanban board.

- If there are multiple teams, produce a combined output and discuss the output with senior management, to engage them in providing support where it is needed.

- Implement the actions and re-run the session. We went for quarterly re-runs.
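For teams that want to log each round of voting, the tallying and trend-capturing steps above could be sketched roughly as follows. Treat it as a starting point only: the function names are hypothetical, and both the majority rule and breaking ties towards the worse colour are assumptions made for this example. In the session itself, the final colour should come out of the team’s discussion.

```python
from collections import Counter

# Ordering used to compare colours; a higher number means healthier.
SEVERITY = {"red": 0, "amber": 1, "green": 2}


def summarise_votes(votes):
    """Reduce one round of cards to a single colour.

    Assumption: the most common colour wins, and ties go to the
    worse colour so that concerns are not hidden.
    """
    if not votes:
        raise ValueError("at least one vote is needed")
    counts = Counter(votes)
    top = max(counts.values())
    tied = [colour for colour, n in counts.items() if n == top]
    return min(tied, key=SEVERITY.__getitem__)


def trend(previous, current):
    """Compare this session's colour with the previous session's."""
    if previous is None:
        return "new"
    diff = SEVERITY[current] - SEVERITY[previous]
    if diff > 0:
        return "up"
    if diff < 0:
        return "down"
    return "flat"


# Example round for one perspective, with last quarter's result for comparison.
colour = summarise_votes(["green", "amber", "amber", "red"])
print(colour, trend("red", colour))
```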

Having run this with a couple of squads recently, we are now going to take the next step and run it across all remaining squads in the Gaming Tribe. We found this method sits in the “Goldilocks zone”: it has just the right level of structure and visual output, while being lightweight enough to allow feedback loops of the right length.

One word of caution if you are thinking about undertaking this exercise: you need to have a supportive and blame-free culture in place. If there is a prevailing atmosphere of always being held to account for any problems that occur, regardless of the cause, this isn’t going to be a good fit. Teams will be reluctant to rate themselves poorly on anything, and you will instantly lose any opportunity to identify areas for improvement. Try to work on educating stakeholders in continuous improvement and embedding a transparent and understanding culture.

I hope you feel motivated to run this at your place of work soon – good luck on your agile journey!